AWS Certified SysOps Administrator - Associate Questions and Answers (Dumps and Practice Questions)

Question : You run a web application where web servers on EC2 instances are in an Auto Scaling group. Monitoring over the last months shows that 6 web servers are necessary to handle the minimum load. During the day up to 12 servers are needed. Five to six days per year, the number of web servers required might go up to 15.

What would you recommend to minimize costs while being able to provide high availability?

1. 6 Reserved instances (heavy utilization), 6 Reserved instances (medium utilization), rest covered by On-Demand instances

2. 6 Reserved instances (heavy utilization). 6 On-Demand instances, rest covered by Spot Instances

4. 6 Reserved instances (heavy utilization), 6 Reserved instances (medium utilization), rest covered by Spot instances

Correct Answer : 1

Explanation: For exam purposes, remember that Spot Instances can be terminated at any time if the Spot price rises above your bid. They should only be used for flexible applications that tolerate interruption. The key phrase here is high availability: an instance that can be terminated at any point without your intervention does not provide HA. That rules out the options that rely on Spot Instances, so the answer is option 1.
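The reasoning behind the reserved/on-demand split can be sketched with a little arithmetic. The snippet below is only an illustration: the tier sizes (6, 6, 3) follow the answer options and the 12- and 15-server figures in the question, while the "12 hours per day" reading of "during the day" and the utilization cut-offs are assumptions made for this example, not AWS definitions.

```python
HOURS_PER_YEAR = 24 * 365

# Tier sizes follow the question; the running hours are assumptions.
tiers = [
    {"name": "baseline", "servers": 6, "hours_per_year": HOURS_PER_YEAR},
    {"name": "daytime", "servers": 6, "hours_per_year": 12 * 365},  # assumed 12 h/day
    {"name": "peak", "servers": 3, "hours_per_year": 24 * 6},       # about six days/year
]

def utilization(tier):
    """Fraction of the year during which this tier's servers are running."""
    return tier["hours_per_year"] / HOURS_PER_YEAR

def suggested_purchase(tier):
    """Map utilization to a purchase option; the cut-offs are illustrative only."""
    u = utilization(tier)
    if u >= 0.75:
        return "Reserved (heavy utilization)"
    if u >= 0.25:
        return "Reserved (medium utilization)"
    return "On-Demand"

plan = {t["name"]: suggested_purchase(t) for t in tiers}
for t in tiers:
    print(f'{t["name"]:>8}: {t["servers"]} servers, '
          f'{utilization(t):.1%} of the year -> {plan[t["name"]]}')
```

Under these assumptions the peak tier runs for under 2% of the year, which is why reserving it would not pay off, and Spot is excluded because of the high-availability requirement.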

Question : You have been asked to propose a multi-region deployment of a web-facing application where a controlled portion of your traffic is being processed by an alternate

region. Which configuration would achieve that goal?

1. Route53 record sets with weighted routing policy

2. Route53 record sets with latency based routing policy

4. Elastic Load Balancing with health checks enabled

Correct Answer : 1

Explanation: When you create a resource record set, you choose a routing policy, which determines how Amazon Route 53 responds to queries:

Simple Routing Policy : Use a simple routing policy when you have a single resource that performs a given function for your domain, for example, one web server that serves content

for the example.com website. In this case, Amazon Route 53 responds to DNS queries based only on the values in the resource record set, for example, the IP address in an A record.

Weighted Routing Policy : Use the weighted routing policy when you have multiple resources that perform the same function (for example, web servers that serve the same website) and

you want Amazon Route 53 to route traffic to those resources in proportions that you specify (for example, one quarter to one server and three quarters to the other). For more

information about weighted resource record sets, see Weighted Routing.

Latency Routing Policy : Use the latency routing policy when you have resources in multiple Amazon EC2 data centers that perform the same function and you want Amazon Route 53 to

respond to DNS queries with the resources that provide the best latency. For example, you might have web servers for example.com in the Amazon EC2 data centers in Ireland and in

Tokyo. When a user browses to example.com, Amazon Route 53 chooses to respond to the DNS query based on which data center gives your user the lowest latency. For more information

about latency resource record sets, see Latency-Based Routing.
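To make the "controlled portion" idea concrete, here is a small simulation of weighted selection. It is a sketch, not Route 53 itself: the record identifiers and the 90/10 weights are hypothetical, and the only rule taken from the weighted routing policy is that each record receives a share equal to its weight divided by the sum of all weights.

```python
import random
from collections import Counter

# Hypothetical weighted record sets for the same DNS name.
records = [
    {"SetIdentifier": "primary-region", "Weight": 90},
    {"SetIdentifier": "alternate-region", "Weight": 10},
]

def traffic_share(records):
    """Expected share per record: its weight divided by the sum of all weights."""
    total = sum(r["Weight"] for r in records)
    return {r["SetIdentifier"]: r["Weight"] / total for r in records}

def pick_record(records, rng):
    """Pick one record at random, in proportion to its weight."""
    weights = [r["Weight"] for r in records]
    return rng.choices(records, weights=weights, k=1)[0]["SetIdentifier"]

shares = traffic_share(records)
rng = random.Random(0)  # fixed seed so the simulation is repeatable
counts = Counter(pick_record(records, rng) for _ in range(10_000))
print(shares)
print(dict(counts))
```

Changing the two weights is all it takes to shift more or less traffic to the alternate region, which is what distinguishes this policy from latency-based routing.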

Question : You have set up individual AWS accounts for each project. You have been asked to make sure your AWS infrastructure costs do not exceed the budget set per project for

each month. Which of the following approaches can help ensure that you do not exceed the budget each month?

1. Consolidate your accounts so you have a single bill for all accounts and projects

2. Set up auto scaling with CloudWatch alarms using SNS to notify you when you are running too

many Instances in a given account

3. ... occurring when the amount for each resource tagged to a particular project matches the budget allocated to the project.

4. Set up CloudWatch billing alerts for all AWS resources used by each account, with email notifications when it hits 50%, 80% and 90% of its budgeted monthly spend

Correct Answer : 4

Explanation: The budget is set for each project. However, billing is account and resource specific. Option 4 is valid because it sets up notifications for each account and each resource.

Option 3 would inform you about the resources only when the budget is reached, whereas option 4 warns you well in advance, with three notifications, so that you can take preventive action before the budget is reached.
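The three notification levels translate directly into three alarm thresholds per account. The sketch below only builds the alarm definitions as plain dictionaries; the account name and the $1,000 budget are hypothetical, and the key names are chosen to mirror the parameters of the CloudWatch alarm API as an assumption for illustration (nothing is sent to AWS here).

```python
def billing_alarm_specs(account_name, monthly_budget_usd, fractions=(0.5, 0.8, 0.9)):
    """Build one alarm definition per budget fraction for a single account."""
    specs = []
    for fraction in fractions:
        specs.append({
            "AlarmName": f"{account_name}-billing-{int(round(fraction * 100))}pct",
            "Namespace": "AWS/Billing",
            "MetricName": "EstimatedCharges",
            "Dimensions": [{"Name": "Currency", "Value": "USD"}],
            "Statistic": "Maximum",
            "Period": 21600,  # six hours; billing data arrives only a few times a day
            "EvaluationPeriods": 1,
            "Threshold": round(monthly_budget_usd * fraction, 2),
            "ComparisonOperator": "GreaterThanOrEqualToThreshold",
        })
    return specs

# Hypothetical project account with a $1,000 monthly budget.
specs = billing_alarm_specs("project-a", 1000)
for spec in specs:
    print(spec["AlarmName"], spec["Threshold"])
```

Each definition would still need a notification target (for example an SNS topic with an email subscription) before it could alert anyone.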

Related Questions

Question :

What would you need to edit to allow connections from your remote IP to your database instance?

1. VPC Network

2. Options Group

4. Security Group

Question :

RDS allows you to restore a database engine to a "point in time" (InnoDB for MySQL).

1. True

2. False

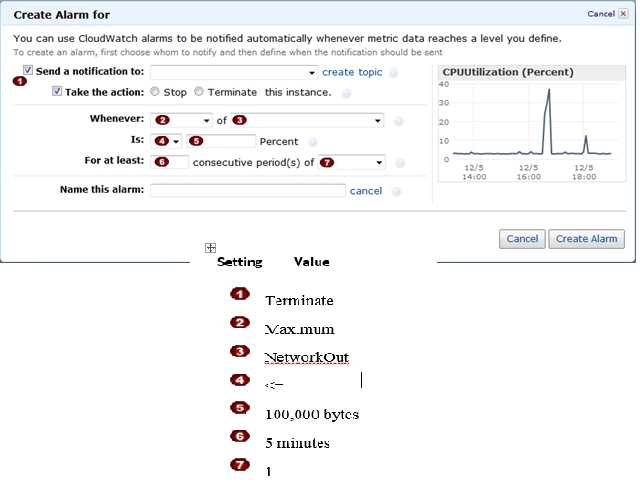

Question : In the Amazon Elastic Compute Cloud (Amazon EC2) console you have set up an alarm with the settings shown. What does this imply?

1. Create an alarm that terminates an instance that runs BigData MapReduce when it is no longer sending results data.

2. Create an alarm that stops an instance that runs BigData MapReduce when it is no longer sending results data.

Question : A user is planning to schedule a backup for an EBS volume. The user wants security of the snapshot data. How can the user achieve data

encryption with a snapshot?

1. Use encrypted EBS volumes so that the snapshot will be encrypted by AWS

2. While creating a snapshot select the snapshot with encryption

4. Enable server side encryption for the snapshot using S3

Question : A user is planning to use AWS services for his web application. If the user is trying to set up his own billing management system for AWS, how

can he configure it?

1. Set up programmatic billing access. Download and parse the bill as per the requirement

2. It is not possible for the user to create his own billing management service with AWS

4. Use AWS billing APIs to download the usage report of each service from the AWS billing console

Question : A user has created a public subnet with VPC and launched an EC2 instance within it. The user is trying to delete the subnet. What will happen in this scenario?

1. It will delete the subnet and make the EC2 instance part of the default subnet

2. It will not allow the user to delete the subnet until the instances are terminated

4. The subnet can never be deleted independently, but the user has to delete the VPC first

Ans: 2

Exp : A Virtual Private Cloud (VPC) is a virtual network dedicated to the user's AWS account. A user can create a subnet with VPC and launch instances inside that subnet. When an instance is launched, it has a network interface attached to it. The user cannot delete the subnet until he terminates the instance and deletes the network interface.

Question : A user has set up an EBS-backed instance and attached EBS volumes to it. The user has set up a CloudWatch alarm on each volume for the disk data. The user has stopped the EC2 instance and detached the EBS volumes. What will be the status of the alarms on the EBS volumes?

1. OK

2. Insufficient Data

4. The EBS cannot be detached until all the alarms are removed

Ans : 2

Exp : Amazon CloudWatch alarm watches a single metric over a time period that the user specifies and performs one or more actions based on the

value of the metric relative to a given threshold over a number of time periods. Alarms invoke actions only for sustained state changes. There are

three states of the alarm: OK, Alarm and Insufficient Data. In this case, since the EBS volume is detached and inactive, the state will be Insufficient Data.
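The three states can be illustrated with a deliberately simplified model. This is not CloudWatch's actual evaluation logic (which has additional rules for missing data); it assumes a "greater than threshold" alarm and treats a window with no datapoints at all as Insufficient Data. The function name and sample values are hypothetical.

```python
def alarm_state(datapoints, threshold, evaluation_periods):
    """Simplified alarm evaluation.

    datapoints: metric values, oldest first; None marks a period with no data.
    Returns "INSUFFICIENT_DATA" when the evaluation window is incomplete or
    empty, "ALARM" when every period in the window breaches the threshold,
    and "OK" otherwise.
    """
    recent = datapoints[-evaluation_periods:]
    present = [d for d in recent if d is not None]
    if len(recent) < evaluation_periods or not present:
        return "INSUFFICIENT_DATA"
    if len(present) == evaluation_periods and all(d > threshold for d in present):
        return "ALARM"
    return "OK"

# A detached, inactive volume reports no datapoints at all.
print(alarm_state([None, None, None], threshold=100, evaluation_periods=3))
print(alarm_state([120, 130, 150], threshold=100, evaluation_periods=3))
print(alarm_state([120, 80, 150], threshold=100, evaluation_periods=3))
```

The second call also shows the "sustained state change" idea: the alarm fires only when all three periods breach, not on a single spike.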

Question : A user has launched an EC2 instance from an instance store backed AMI. The infrastructure team wants to create an AMI from the running

instance. Which of the below mentioned credentials is not required while creating the AMI?

1. AWS account ID

2. X.509 certificate and private key

4. Access key and secret access key

Ans : 3

Exp : When the user has launched an EC2 instance from an instance store backed AMI and the admin team wants to create an AMI from it, the user needs to set up the AWS AMI tools or the API tools first. Once the tools are set up, the user will need the following credentials:

AWS account ID;

AWS access and secret access key;

X.509 certificate with private key.

Question : A user has configured an SSL listener at ELB as well as on the back-end instances. Which of the below

mentioned statements helps the user understand ELB traffic handling with respect to the SSL listener?

1. It is not possible to have the SSL listener both at ELB and back-end instances

2. ELB will modify headers to add requestor details

4. ELB will not modify the headers

Ans : 4

Exp : When the user has configured Transmission Control Protocol (TCP) or Secure Sockets Layer (SSL) for both front-end and back-end connections of the Elastic Load Balancer, the load balancer forwards the request to the back-end instances without modifying the request headers unless the proxy header is enabled. SSL does not support sticky sessions. If the user has enabled Proxy Protocol, it adds the source and destination IP to the header.

Question : A user has created a CloudFormation stack. The stack creates AWS services, such as EC2 instances, ELB, Auto Scaling, and RDS. While creating the stack it created EC2, ELB and Auto Scaling but failed to create RDS. What will CloudFormation do in this scenario?

1. CloudFormation can never throw an error after launching a few services since it verifies all the steps before launching

2. It will warn the user about the error and ask the user to manually create RDS

4. It will wait for the user's input about the error and correct the mistake after the input

Ans : 3

Exp : AWS CloudFormation is an application management tool which provides application modelling, deployment, configuration, management and related activities. An AWS CloudFormation stack is a collection of AWS resources which are created and managed as a single unit when AWS CloudFormation instantiates a template. If any of the services fails to launch, CloudFormation will roll back all the changes and terminate or delete all the created services.

Question : A user is trying to launch an EBS backed EC2 instance under free usage. The user wants to achieve

encryption of the EBS volume. How can the user encrypt the data at rest?

1. Use AWS EBS encryption to encrypt the data at rest

2. The user cannot use EBS encryption and has to encrypt the data manually or using a third party tool

4. Encryption of volume is not available as a part of the free usage tier

Ans : 2

Exp : AWS EBS supports encryption of the volume while creating new volumes. It supports encryption of the data at rest, the I/O as well as all the

snapshots of the EBS volume. The EBS supports encryption for the selected instance type and the newer generation instances, such as m3, c3,

cr1, r3, g2. It is not supported with a micro instance.

Question : A user has created a VPC with public and private subnets using the VPC wizard. The user has not launched any instance manually and is trying to

delete the VPC. What will happen in this scenario?

1. It will not allow the user to delete the VPC as it has subnets with route tables

2. It will not allow the user to delete the VPC since it has a running route instance

4. It will not allow the user to delete the VPC since it has a running NAT instance

Ans : 4

Exp : A Virtual Private Cloud (VPC) is a virtual network dedicated to the user's AWS account. A user can create a subnet with VPC and launch instances inside that subnet. If the user has created public and private subnets, the instances in the public subnet can receive inbound traffic directly from the Internet, whereas the instances in the private subnet cannot. If these subnets are created with the wizard, AWS will create a NAT instance with an Elastic IP. If the user tries to delete the VPC, AWS will not allow it because the NAT instance is still running.

Question : An organization is measuring the latency of an application every minute and storing data inside a file in the JSON format. The organization wants

to send all latency data to AWS CloudWatch. How can the organization achieve this?

1. The user has to parse the file before uploading data to CloudWatch

2. It is not possible to upload the custom data to CloudWatch

4. The user can use the CloudWatch Import command to import data from the file to CloudWatch

Ans : 3

Exp : AWS CloudWatch supports custom metrics. The user can always capture the custom data and upload it to CloudWatch using the CLI or APIs. The user must always include the namespace as part of the request. If the user wants to upload the custom data from a file, he can supply the file name with the --metric-data parameter of the put-metric-data command.
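As a rough illustration of preparing such a file, the snippet below converts per-minute latency samples into a list of metric datums and serialises it as JSON. The input layout (`timestamp`, `latency_ms`), the metric name `Latency`, and the sample values are all hypothetical; the output field names are assumed to follow the metric-data structure that put-metric-data accepts.

```python
import json

# Hypothetical contents of the organization's latency file.
raw = """[
  {"timestamp": "2014-01-01T00:00:00Z", "latency_ms": 120},
  {"timestamp": "2014-01-01T00:01:00Z", "latency_ms": 95}
]"""

def to_metric_data(samples, metric_name="Latency"):
    """Convert latency samples into a list of metric datum dictionaries."""
    return [
        {
            "MetricName": metric_name,
            "Timestamp": sample["timestamp"],
            "Value": float(sample["latency_ms"]),
            "Unit": "Milliseconds",
        }
        for sample in samples
    ]

metric_data = to_metric_data(json.loads(raw))
payload = json.dumps(metric_data, indent=2)
print(payload)
```

The printed JSON could be saved to a file and passed to the CLI with a `file://` path; the namespace is supplied separately on the command line.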

Question : A user has set up a billing alarm using CloudWatch for $200. The usage of AWS exceeded $200 after some days. The user wants to increase the limit from $200 to $400. What should the user do?

1. Create a new alarm of $400 and link it with the first alarm

2. It is not possible to modify the alarm once it has crossed the usage limit

4. Create a new alarm for the additional $200 amount

Ans : 3

Exp : AWS CloudWatch supports enabling the billing alarm on the total AWS charges. The estimated charges are calculated and sent several times

daily to CloudWatch in the form of metric data. This data will be stored for 14 days. This data also includes the estimated charges for every service

in AWS used by the user, as well as the estimated overall AWS charges. If the user wants to increase the limit, the user can modify the alarm and

specify a new threshold.

Question : A sys admin has created the below mentioned policy and applied it to an S3 object named aws.jpg. The aws.jpg is inside a bucket named

hadoopexam. What does this policy define?

"Statement": [{

"Sid": "Stmt1388811069831",

"Effect": "Allow",

"Principal": { "AWS": "*"},

"Action": [ "s3:GetObjectAcl", "s3:ListBucket", "s3:GetObject"],

"Resource": [ "arn:aws:s3:::hadoopexam/*.jpg"]

}]

1. It is not possible to define a policy at the object level

2. It will make all the objects of the bucket hadoopexam as public

4. It will make the aws.jpg object public

Ans : 1

Exp : A system admin can grant permission on S3 objects or buckets to any user, or make objects public, using a bucket policy or a user policy. Both use the JSON-based access policy language. Generally, if the user defines an ACL on the bucket, the objects in the bucket do not inherit it, and vice versa. A bucket policy is defined at the bucket level and can make the objects as well as the bucket public with a single policy applied to that bucket. It cannot be applied at the object level.
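Although the policy is attached to the bucket, its Resource pattern decides which object keys the statement covers. The sketch below approximates that wildcard match with Python's `fnmatchcase`; this is an assumption for illustration, not the actual S3 policy evaluator, and the helper names and extra object keys are hypothetical.

```python
from fnmatch import fnmatchcase

# Resource pattern taken from the statement in the question.
RESOURCE_PATTERN = "arn:aws:s3:::hadoopexam/*.jpg"

def object_arn(bucket, key):
    """Build the ARN of an S3 object from its bucket name and key."""
    return f"arn:aws:s3:::{bucket}/{key}"

def statement_covers(bucket, key, pattern=RESOURCE_PATTERN):
    """Approximate the policy wildcard match ('*' matches any characters)."""
    return fnmatchcase(object_arn(bucket, key), pattern)

for bucket, key in [
    ("hadoopexam", "aws.jpg"),
    ("hadoopexam", "photos/cat.jpg"),
    ("hadoopexam", "notes.txt"),
    ("otherbucket", "aws.jpg"),
]:
    print(bucket, key, statement_covers(bucket, key))
```

Under this approximation the pattern matches every .jpg key in the bucket, not only aws.jpg, which fits the point that the policy lives at the bucket level.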

Question : A user is trying to save some cost on the AWS services. Which of the below mentioned options will not help him save cost?

1. Delete the unutilized EBS volumes once the instance is terminated

2. Delete the Auto Scaling launch configuration after the instances are terminated

4. Delete the AWS ELB after the instances are terminated

Ans : 2

Exp : AWS bills the user on a pay-as-you-go model. AWS will charge the user once the AWS resource is allocated. Even though the user is not using the resource, AWS will charge if it is in service or allocated. Thus, it is advised that once the user's work is completed he should:

Terminate the EC2 instance;

Delete the EBS volumes;

Release the unutilized Elastic IPs;

Delete the ELB.

The Auto Scaling launch configuration does not cost the user anything. Thus, it makes no difference to the cost whether it is deleted or not.

Question : A user is trying to aggregate all the CloudWatch metric data of the last week. Which of the below mentioned statistics is not available for the user

as a part of data aggregation?

1. Aggregate

2. Sum

4. Average